Sussex-Huawei Locomotion Challenge 2026

The Sussex-Huawei Locomotion Dataset [1-2] will be used in an activity recognition challenge, with results to be presented at the HASCA Workshop at UbiComp 2026.

This eighth edition of the challenge follows on our very successful 2018, 2019, 2020, 2021, 2023, 2024 and 2025 challenges, which saw the participation of more than 140 teams and 300 researchers [4-9].

Motivated by the growing interest in foundation models, particularly large language models, this year’s edition focuses on their application to transportation mode recognition. The goal is to accurately recognize eight modes of locomotion and transportation in a user-independent manner. In particular, the challenge aims to explore novel ways of adapting, combining, and interfacing foundation models with sensor data to improve recognition performance.

Participants are required to design a complete pipeline that leverages existing pre-trained foundation models to process sensor data and predict activity labels. The 2025 challenge saw an initial exploration of foundation models for transportation mode recognition, including time-series models such as MOMENT and Chronos, vision models such as Flamingo, and language models such as BERT, used in either pre-trained or retrained settings. This year’s challenge particularly encourages the exploration of new or underutilized foundation models, especially those not investigated in previous editions. Foundation models must be used in a frozen manner, i.e., without any fine-tuning or retraining. However, participants may train lightweight, task-specific components (e.g., classification heads) on top of frozen foundation models, provided that the parameters of the foundation model itself are not updated.
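The frozen-model rule above can be illustrated with a minimal sketch. Everything in it is hypothetical: `FrozenEncoder` is a toy stand-in for a real pre-trained foundation model (here just a fixed projection), and `CentroidHead` is one possible lightweight trainable component (a nearest-centroid classifier). Neither is prescribed by the challenge. The point is only the separation of roles: the encoder's weights are never written to, and only the head is fitted on the embeddings.

```python
import random


class FrozenEncoder:
    """Hypothetical stand-in for a pre-trained foundation model (assumption)."""

    def __init__(self, in_dim, out_dim, seed=0):
        rng = random.Random(seed)
        # Weights are stored as nested tuples so they cannot be modified in place,
        # mirroring the "frozen" requirement.
        self.weights = tuple(
            tuple(rng.uniform(0.1, 1.0) for _ in range(in_dim))
            for _ in range(out_dim)
        )

    def embed(self, frame):
        """Map one frame (sequence of floats) to a fixed-size embedding."""
        return [sum(w * x for w, x in zip(row, frame)) for row in self.weights]


class CentroidHead:
    """Lightweight trainable component: nearest-centroid classifier on embeddings."""

    def __init__(self):
        self.centroids = {}

    def fit(self, embeddings, labels):
        sums, counts = {}, {}
        for emb, lab in zip(embeddings, labels):
            acc = sums.setdefault(lab, [0.0] * len(emb))
            for i, value in enumerate(emb):
                acc[i] += value
            counts[lab] = counts.get(lab, 0) + 1
        # Only the head's state (the centroids) is learned from the training data.
        self.centroids = {
            lab: [value / counts[lab] for value in acc] for lab, acc in sums.items()
        }
        return self

    def predict(self, emb):
        def squared_distance(centroid):
            return sum((a - b) ** 2 for a, b in zip(emb, centroid))

        return min(self.centroids, key=lambda lab: squared_distance(self.centroids[lab]))
```

In a real pipeline the encoder would be replaced by an actual pre-trained model run in inference mode, and the head could be any small classifier trained on its outputs.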

The provided dataset includes training, validation, and testing sets. Each submission should implement an end-to-end algorithmic pipeline that utilizes foundation models to process sensor data, build predictive models, and output the recognized activities. Participants are also required to submit a technical paper describing their methodology and development process. Accepted contributions will be presented at a special session of the HASCA Workshop at UbiComp 2026 and included in the adjunct proceedings.

Deadlines

- Registration via email: as soon as possible, but not later than 30.05.2026

- Challenge duration: 10.05.2026 – 30.06.2026

- Submission deadline: 30.06.2026

- HASCA-SHL paper submission: 04.07.2026

- HASCA-SHL review notification: 15.07.2026

- HASCA-SHL camera ready submission: 22.07.2026

- UbiComp early-bird registration: TBD

- HASCA workshop: 11-12 October (TBC), 2026 in Shanghai, China

- Release of the ground-truth of the test data: TBD

Registration

Each team should send a registration email to shldataset.challenge@gmail.com as soon as possible, but not later than 30.05.2026, stating:

- The name of the team

- The names of the participants in the team

- The organization/company (individuals are also encouraged)

- The contact person with email address

HASCA Workshop

To be part of the final ranking, participants will be required to submit a detailed paper to the HASCA workshop. The paper should contain a technical description of the processing pipeline, the algorithms, and the results achieved during the development/training phase. Submissions must follow the HASCA format, with a length of 3 to 6 pages.

Prizes

- 800 £

- 400 £

- 200 £

Dataset and format

The data is divided into three parts: train, validation and test. It comprises 59 days of training data, 6 days of validation data and 28 days of test data. The train, validation and test data were generated by segmenting the whole dataset with a non-overlapping sliding window of 5 seconds.

The train data contains the raw sensor data from one user (user 1) and four phone locations (bag, hips, torso, hand). It also includes the activity labels (class label). The train data contains four sub-directories (Bag, Hips, Torso, Hand) with the following files in each sub-directory:

- Acc_*.txt (with * being x, y, or z): acceleration

- Gyr_*.txt: rate of turn

- Magn_*.txt: magnetic field

- Label.txt: activity classes.

Each file contains 196072 lines x 500 columns, corresponding to 196072 frames each containing 500 samples (5 seconds at a sampling rate of 100 Hz). The frames for the train data are consecutive in time.

The validation data contains the raw sensor data from the other two users (mixing user 2 and user 3) and four phone locations (bag, hips, torso, hand), with the same files as the train dataset. Each file in the validation data contains 28789 lines x 500 columns, corresponding to 28789 frames each containing 500 samples (5 seconds at a sampling rate of 100 Hz). In each data frame, one or multiple sensor modalities are randomly missing, which is simulated by setting the corresponding sensor data to zero. The validation data is extracted from the already released preview of the SHL dataset.
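The file layout described above (one frame per line, 500 samples per frame) can be read with a small streaming loader. This is a minimal sketch, not an official loader: it assumes values are separated by whitespace, which should be confirmed against the downloaded files, and the function name `read_frames` is illustrative.

```python
SAMPLES_PER_FRAME = 500  # 5 seconds at 100 Hz, as stated in the dataset description


def read_frames(path, expected_columns=SAMPLES_PER_FRAME):
    """Yield one frame (list of floats) per line of a file such as Acc_x.txt.

    Assumption: values on a line are whitespace-separated.
    """
    with open(path, "r") as handle:
        for line_number, line in enumerate(handle, start=1):
            values = line.split()
            if not values:
                continue  # tolerate blank lines, e.g. at the end of the file
            if len(values) != expected_columns:
                raise ValueError(
                    f"{path}:{line_number}: expected {expected_columns} "
                    f"columns, got {len(values)}"
                )
            yield [float(value) for value in values]
```

Because the function is a generator, a multi-gigabyte file can be processed frame by frame without loading it fully into memory.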

The test data contains the raw sensor data from the other two users (mixing user 2 and user 3) and three phone locations (bag, hips, torso), with the same files as the train dataset but no class label. In each data frame, one or multiple sensor modalities are randomly missing, which is simulated by setting the corresponding sensor data to zero. This is the data on which ML predictions must be made. The files contain 92726 lines x 500 columns (5 seconds at a sampling rate of 100 Hz). In order to challenge the real-time performance of the classification, the frames are shuffled, i.e. two successive frames in the file are likely not consecutive in time.
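Since missing modalities are encoded as zeros, a pipeline may want to detect them per frame before feeding data to a model. The sketch below is one possible check under a stated assumption: a modality counts as missing when every sample of all three of its axes is exactly zero within the frame. The channel names follow the file names listed above; the function name `missing_modalities` is illustrative.

```python
MODALITIES = ("Acc", "Gyr", "Magn")
AXES = ("x", "y", "z")


def missing_modalities(frame):
    """Return the modalities that appear to be missing in one frame.

    `frame` maps channel names such as "Acc_x" to that channel's samples.
    Assumption: a missing modality has all samples of all three axes set to zero.
    """
    missing = []
    for modality in MODALITIES:
        channels = [frame[f"{modality}_{axis}"] for axis in AXES]
        if all(value == 0.0 for channel in channels for value in channel):
            missing.append(modality)
    return missing
```

The returned list can be used, for example, to build a per-frame mask or to route frames to a model variant that ignores the absent sensors.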

Downloads

Download train data

- Training set – Bag (3.0 GB)

- Training set – Hand (3.2 GB)

- Training set – Hips (3.1 GB)

- Training set – Torso (3.0 GB)

Download validation data

Download test data

Download example submission

Submission of predictions on the test dataset

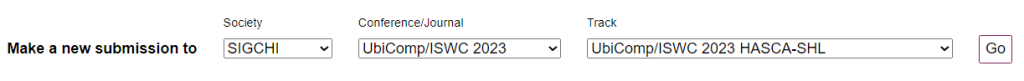

The participants should submit a plain text prediction file (e.g. “teamName_predictions.txt), containing the a matrix of size 92726 lines x 500 columns corresponding to each sample in the testing dataset. An example of submission is available. The participants’ predictions should be submitted online by sending an email to shldataset.challenge@gmail.com, in which there should be a link to the predictions file, using services such as Dropbox, Google Drive, etc. In case the participants cannot provide links using some file sharing service, they should contact the organizers via email shldataset.challenge@gmail.com, which will provide an alternate way to send the data. To be part of the final ranking, participants will be required to publish a detailed paper in the proceedings of the HASCA workshop. The date for the paper submission is 60.06.2026. All the papers must be formatted using the ACM SIGCHI Master Article template with 2 columns. The template is available at TEMPLATES ISWC/UBICOMP2026. Submissions do not need to be anonymous.Submission is electronic, using precision submission system. The submission site is open at https://new.precisionconference.com/submissions (select SIGCHI / UbiComp 2025 / UbiComp 2026 Workshop – HASCA-SHL and push Go button). See the image below.
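The prediction file described above can be written and sanity-checked with a few lines of code. This is a sketch under assumptions that should be verified against the example submission: labels are written as integers, columns are separated by single spaces, and one predicted label per frame is repeated across all 500 columns. The function names are illustrative.

```python
def write_predictions(path, frame_labels, samples_per_frame=500):
    """Write one line per frame, repeating the frame's label for every sample.

    Assumptions: integer labels, space-separated columns.
    """
    with open(path, "w") as handle:
        for label in frame_labels:
            row = " ".join([str(int(label))] * samples_per_frame)
            handle.write(row + "\n")


def check_predictions(path, expected_lines, expected_columns=500):
    """Raise ValueError if the file does not have the expected matrix shape."""
    line_count = 0
    with open(path, "r") as handle:
        for line_number, line in enumerate(handle, start=1):
            columns = len(line.split())
            if columns != expected_columns:
                raise ValueError(
                    f"line {line_number}: expected {expected_columns} columns, got {columns}"
                )
            line_count += 1
    if line_count != expected_lines:
        raise ValueError(f"expected {expected_lines} lines, got {line_count}")
    return True
```

For the test set, `check_predictions(path, expected_lines=92726)` would confirm the 92726 x 500 shape before the file is uploaded.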

Rules

Some of the main rules are listed below. These rules apply to both tasks, unless specified otherwise under each task’s section. The detailed rules are contained in the following document.

- Eligibility

  - You do not work in or collaborate with the SHL project (http://www.shl-dataset.org/).

  - If you submit an entry but are not qualified to enter the contest, the entry is voluntary. The organizers reserve the right to evaluate it for scientific purposes, but under no circumstances will such entries qualify for sponsored prizes.

- Entry

  - Registration (see above): as soon as possible but not later than 30.05.2026.

  - Challenge: Participants will submit prediction results on test data.

  - Workshop paper: To be part of the final ranking, participants will be required to publish a detailed paper in the proceedings of the HASCA workshop (http://hasca2026.hasc.jp/). The dates will be set during the competition.

  - Submission: The participants’ predictions should be submitted by sending an email to shldataset.challenge@gmail.com containing a link to the predictions file, using services such as Dropbox, Google Drive, etc. Participants who cannot provide a link through a file sharing service should contact the organizers at shldataset.challenge@gmail.com, who will provide an alternate way to send the data.

  - A single submission is allowed per team. The same person cannot be in multiple teams, except if that person is a supervisor. The number of supervisors is limited to 3 per team. One supervisor can cover up to 3 teams.

- Evaluation

  - The challenge this year will explore the application of foundation models to transportation mode recognition. The ranking of submissions will be based mainly on performance on the test data.

Q&A

Contact

All inquiries should be directed to: shldataset.challenge@gmail.com

Organizers

- Prof. Daniel Roggen, University of Sussex (UK)

- Dr. Lin Wang, Queen Mary University of London (UK)

- Dr. Paula Lago, Concordia University in Montreal (CA)

- Prof. Hristijan Gjoreski, Ss. Cyril and Methodius University (MK)

- Dr. Kazuya Murao, Ritsumeikan University (JP)

- Dr. Tsuyoshi Okita, Kyushu Institute of Technology (JP)

References

[1] H. Gjoreski, M. Ciliberto, L. Wang, F.J.O. Morales, S. Mekki, S. Valentin, and D. Roggen, “The University of Sussex-Huawei locomotion and transportation dataset for multimodal analytics with mobile devices,” IEEE Access 6 (2018): 42592-42604. [DATASET INTRODUCTION]

[2] L. Wang, H. Gjoreski, M. Ciliberto, S. Mekki, S. Valentin, and D. Roggen, “Enabling reproducible research in sensor-based transportation mode recognition with the Sussex-Huawei dataset,” IEEE Access 7 (2019): 10870-10891. [DATASET ANALYSIS]

[3] L. Wang, H. Gjoreski, M. Ciliberto, S. Mekki, S. Valentin, and D. Roggen, “Benchmarking the SHL recognition challenge with classical and deep-learning pipelines,” in Proceedings of the 2018 ACM International Joint Conference and 2018 International Symposium on Pervasive and Ubiquitous Computing and Wearable Computers, pp. 1626-1635, 2018. [BASELINE FOR MOTION SENSORS]

[4] L. Wang, H. Gjoreski, M. Ciliberto, P. Lago, K. Murao, T. Okita, and D. Roggen, “Three-year review of the 2018–2020 SHL challenge on transportation and locomotion mode recognition from mobile sensors,” Frontiers in Computer Science, 3(713719): 1-24, Sep. 2021. [SHL 2018-2020 SUMMARY][Motion]

[5] L. Wang, M. Ciliberto, H. Gjoreski, P. Lago, K. Murao, T. Okita, and D. Roggen, “Locomotion and transportation mode recognition from GPS and radio signals: Summary of SHL challenge 2021,” Adjunct Proc. 2021 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proc. 2021 ACM International Symposium on Wearable Computers (UbiComp’ 21), 412-422, Virtual Event, 2021. [SHL 2021 SUMMARY][GPS+Radio]

[6] L. Wang, M. Ciliberto, H. Gjoreski, P. Lago, K. Murao, T. Okita, and D. Roggen, “Summary of SHL challenge 2023: Recognizing locomotion and transportation mode from GPS and motion sensors,” Adjunct Proc. 2023 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proc. 2023 ACM International Symposium on Wearable Computers (UbiComp’ 23), 575-585, Mexico, 2023. [SHL 2023 SUMMARY][Motion+GPS]

[7] L. Wang, M. Ciliberto, H. Gjoreski, P. Lago, K. Murao, T. Okita, and D. Roggen, “Summary of SHL challenge 2024: Motion sensor-based locomotion and transportation mode recognition in missing data scenarios,” UbiComp ’24: Companion of the 2024 on ACM International Joint Conference on Pervasive and Ubiquitous Computing, 555-562, Melbourne, Australia, Oct. 2024. [SHL 2024 SUMMARY][Motion]

[8] L. Wang, M. Ciliberto, H. Gjoreski, P. Lago, K. Murao, T. Okita, and D. Roggen, “Summary of SHL challenge 2025: Locomotion and transportation mode recognition using foundation models,” UbiComp ’25: Companion of the 2025 ACM International Joint Conference on Pervasive and Ubiquitous Computing, 1074-1081, Espoo, Finland, Oct. 2025. [SHL 2025 SUMMARY][Foundation models – Task 1]

[9] T. Okita, K. Ukita, A. Miyazaki, et al., “Foundation models to tackle activity recognition in unknown domain: Sussex-Huawei Locomotion Challenge 2025 Task 2,” UbiComp ’25: Companion of the 2025 ACM International Joint Conference on Pervasive and Ubiquitous Computing, 983-991, Espoo, Finland, Oct. 2025. [SHL 2025 SUMMARY – TASK 2][Foundation models – Task 2]